Block AI Crawlers? When It Helps and When It Hurts

- What counts as an AI crawler, exactly?

- The upside of allowing AI crawlers

- The downside of leaving the door wide open

- Who should generally allow AI crawlers, and who should limit them?

- Quick decision table

- How to implement a split policy the right way

- Making the most of AI visibility when you do allow

- Measurement and monitoring

- Real-world recommendations by scenario

- Common myths to ignore

- The Altimizo take

- References and further reading

If you have spotted mysterious visitors in your server logs at 2 a.m., sipping your bandwidth and leaving no thank-you note, you have met AI crawlers. Should you block them? The short answer: sometimes. The smarter answer comes from weighing AI visibility and brand lift against intellectual property risk and revenue leakage.

What counts as an AI crawler, exactly?

Three broad groups knock on your door:

- Training crawlers: bots that ingest web pages to train large models. Examples include GPTBot and Common Crawl's CCBot. This group also covers opt-out tokens such as Google-Extended and Applebot-Extended, which are robots.txt controls over AI training use rather than separate crawlers.

- Answer crawlers: fetchers that assistants and AI search engines use to pull pages into responses with citations, for example Bing Copilot or Perplexity when browsing. These may send referral traffic, or at least earn brand mentions.

- Unruly scrapers: imitators that ignore robots.txt, repackage your content, and do not cite you. Robots rules help with ethical bots, not with these.

If your goal is to earn customers, not just pageviews, there are situations where being visible in AI answers pays off. There are also very real reasons to limit access.

The upside of allowing AI crawlers

- AI visibility: assistants increasingly summarize the web for users. If your content is in their training data or browsing results, you are more likely to be cited or described in their answers. That creates brand lift and, in some cases, referral traffic.

- AI traffic: some assistants and generative search experiences include clickable citations. Perplexity, Bing Copilot, and Google’s experimental AI search features have all surfaced linked sources at various times. You will not get every click, but you can earn some high-intent ones.

- Authority signaling: clean, well-structured content can be disproportionately favored when assistants look for dependable sources. Over time, that can compound your brand’s perceived expertise.

The downside of leaving the door wide open

- Intellectual property risk: models can ingest and paraphrase your unique content. That can dilute your competitive edge or undercut monetization. If you sell content, this risk is not theoretical.

- Traffic cannibalization: for some informational queries, assistants answer the question directly. That is great for users, but not for your session count.

- Compliance and context risks: assistants can quote regulated or sensitive content without the nuance you intended, creating brand or legal problems.

Who should generally allow AI crawlers, and who should limit them?

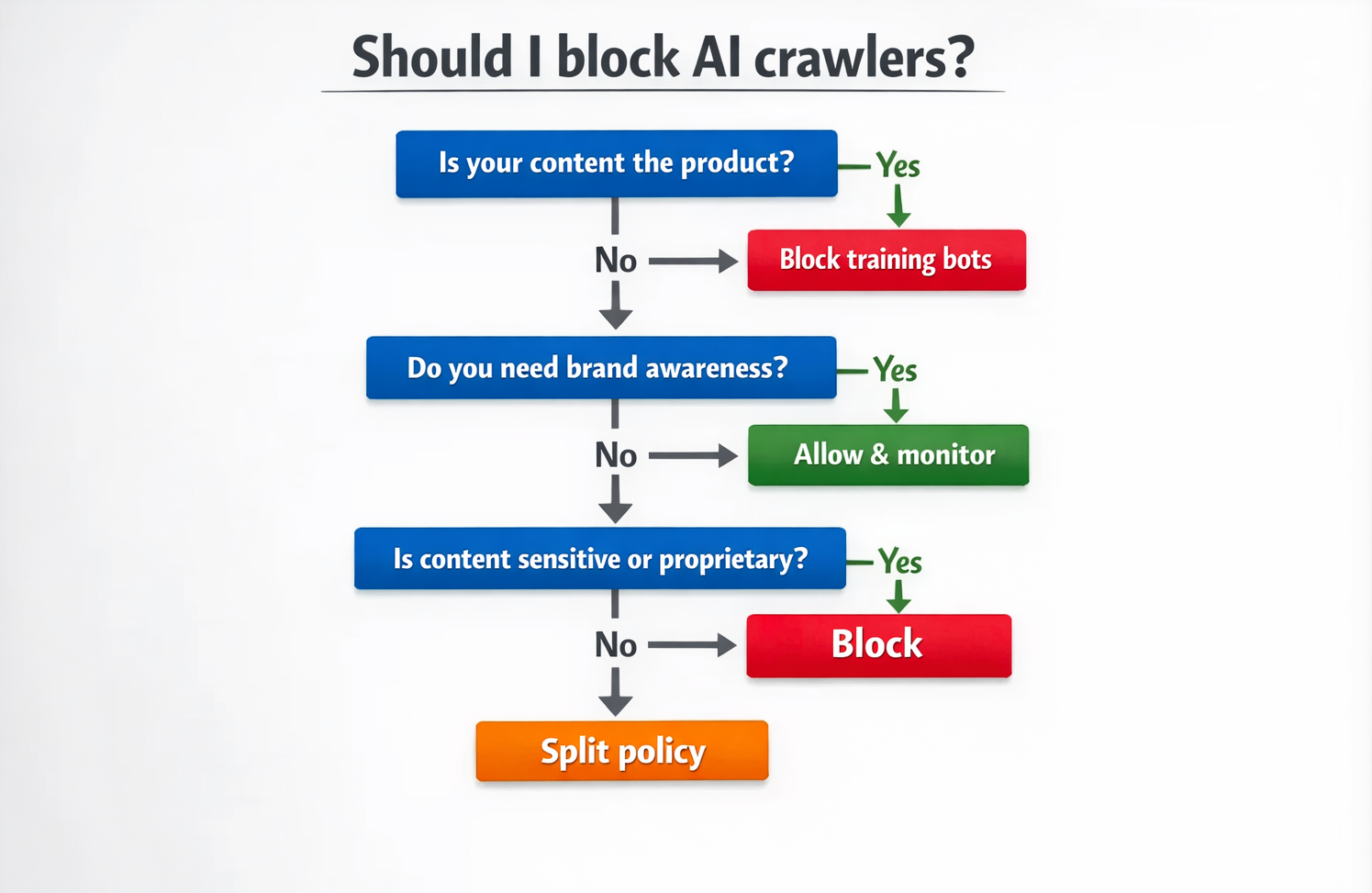

Here is a pragmatic way to decide:

Usually allow and optimize for AI visibility

- Local service businesses such as plumbers, dentists, law firms, and home services: the upside of being mentioned in AI answers for “near me” or problem-based searches outweighs the risk. You want your NAP (name, address, phone) details, service areas, and reviews reflected wherever users look.

- E-commerce with commodity products: if you compete on breadth, price, or availability, exposure in assistants can drive incremental discovery. Keep your product detail pages (PDPs) structured and current.

- SaaS and developer tools with public docs: docs and how-to content benefit from citations in AI answers. If adoption and usage are your growth loops, visibility is gold.

- Early-stage brands hungry for awareness: if no one knows you exist, some content risk is an acceptable price for mindshare.

Recommendations when you allow:

- Lean into structured data: implement Schema.org markup for products, local businesses, and articles. Search systems use it to understand your pages. That can help the assistants that draw on those systems describe and cite you accurately.

- Publish summary-first content: give a crisp, quotable answer near the top, then invite deeper reading with differentiators and CTAs.

- Track brand mentions in AI surfaces: spot-check key queries weekly and log which assistants cite you. Adjust headings and summaries to improve your citation odds.
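Schema.org markup is commonly embedded as JSON-LD inside a script tag in the page HTML. The Python sketch below builds a LocalBusiness object. The property names (name, url, telephone, address as a PostalAddress, areaServed) are standard Schema.org vocabulary. The function names and every business detail in the usage block are hypothetical placeholders. Check Schema.org and Google's structured data guidelines for the properties your business type supports.

```python
import json


def local_business_jsonld(name, url, phone, street, city, region, postal_code, area_served):
    """Return a Schema.org LocalBusiness description serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        # Keep these NAP fields identical to what appears elsewhere on the site.
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
        "areaServed": area_served,
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld):
    """Wrap JSON-LD in the script element that goes into the page HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical business details, for illustration only.
    print(as_script_tag(local_business_jsonld(
        name="Example Plumbing Co.",
        url="https://www.example.com/",
        phone="+1-555-0100",
        street="123 Main St",
        city="Springfield",
        region="IL",
        postal_code="62701",
        area_served=["Springfield", "Chatham"],
    )))
```

Paste the printed tag into the page template. Google's Rich Results Test can then confirm that the markup parses.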

Usually limit or take a split approach

- Publishers and content-driven businesses, including ad-supported sites, recipe sites, magazines, research publishers, and course creators: your content is the product, so blocking training crawlers is often sensible.

- Proprietary data and paywalled content: internal benchmarks, pricing, and gated analyses should not end up training general-purpose models.

- Regulated and sensitive categories such as finance, health, and legal: the risk of out-of-context summaries is non-trivial, so keep tight control.

Recommendations when you limit:

- Use a split policy: allow AI crawlers on marketing pages and press releases, and block them from premium, gated, and members-only paths.

- Tighten your Terms of Service (TOS): explicitly prohibit data mining or model training without permission. It is not a force field, but it strengthens your legal position.

- Consider partial excerpts: show teasers publicly and move the unique value behind authentication.

Quick decision table

| Business type | Default stance | Why |
|---|---|---|
| Local services and SMB lead gen | Allow, with monitoring | AI mentions drive discovery and calls. |
| E-commerce, commodity catalog | Allow, protect proprietary data | Extra visibility on generic queries is useful. |
| SaaS with public docs | Allow, structure heavily | Docs cited in answers drive adoption. |
| News, magazines, recipe sites | Block training bots, allow search bots | Content is monetized, protect IP. |
| Course creators and research firms | Block training bots, gate premium | Content is the product. |
| Regulated verticals with sensitive info | Split or block | Reduce compliance and misquote risk. |

How to implement a split policy the right way

Robots.txt is table stakes for ethical bots. It is not a security control, so pair it with server rules for anything sensitive.

1) Start with robots.txt controls

- Google’s robots.txt basics on Google Search Central explain the standard and its precedence rules.

- Major AI-related user-agents or opt-out tokens you can control include:

| Agent or token | Purpose | Notes |
|---|---|---|
| GPTBot | OpenAI training crawler | See OpenAI’s guidance on blocking at [OpenAI GPTBot docs](https://platform.openai.com/docs/gptbot). |
| CCBot | Common Crawl crawler | Many models are trained on Common Crawl data. Docs at [Common Crawl](https://commoncrawl.org/ccbot). |
| Google-Extended | Opt-out control for some Google AI training uses | A robots.txt token announced on Google’s blog, not a search crawler. Blocking it does not affect Google Search. |
| Applebot-Extended | Opt-out control for Apple’s AI training uses | Details under the Applebot overview at [Apple Support](https://support.apple.com/en-us/HT204683). |

Example robots.txt that blocks common training agents but allows normal search crawlers like Googlebot and Bingbot:

```text
# Allow standard search engines
User-agent: Googlebot
Disallow:

User-agent: Bingbot
Disallow:

# Block common AI training crawlers
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: Applebot-Extended
Disallow: /

# Optional additions (PerplexityBot also feeds Perplexity's cited answers, so weigh the visibility trade-off)
User-agent: PerplexityBot
Disallow: /

User-agent: ClaudeBot
Disallow: /
```

Notes:

- User-agent strings evolve. Revisit quarterly and check your logs.

- Robots.txt only governs compliant crawlers. Treat it as etiquette, not enforcement.
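Before deploying, you can sanity-check a policy like the example above with Python's standard-library urllib.robotparser. This is a sketch, not a guarantee of crawler behavior. The example URL is a placeholder, and you pass product tokens such as GPTBot rather than full user-agent strings. Python's parser is also simpler than Google's: it applies the first group and rule that match, rather than RFC 9309's most-specific-match rule. Treat a pass here as a smoke test.

```python
from urllib.robotparser import RobotFileParser

# The example policy from above, with each group's lines kept together.
ROBOTS_TXT = """\
# Allow standard search engines
User-agent: Googlebot
Disallow:

User-agent: Bingbot
Disallow:

# Block common AI training crawlers
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: Applebot-Extended
Disallow: /
"""

AGENTS = ["Googlebot", "Bingbot", "GPTBot", "CCBot",
          "Google-Extended", "Applebot-Extended", "SomeOtherBot"]


def fetch_permissions(robots_txt, url, agents):
    """Map each product token to whether robots_txt lets it fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    results = fetch_permissions(ROBOTS_TXT, "https://www.example.com/blog/post", AGENTS)
    for agent, ok in results.items():
        print(f"{agent:<18} {'allowed' if ok else 'blocked'}")
```

Googlebot and Bingbot should come back allowed and the four AI tokens blocked. An unlisted agent such as SomeOtherBot also comes back allowed, because robots.txt permits anything it does not mention. Keep each User-agent line and its rules together without blank lines in between: CPython's parser, for one, drops a User-agent line that is followed by a blank line.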

2) Block by path and authentication

- Put premium, members-only, and proprietary content behind a login. Crawlers cannot fetch authenticated pages, so this protects them whether or not a bot honors robots.txt.

- For public but sensitive sections, block by folder, for example Disallow: /members/ or Disallow: /studies/ in each AI crawler’s user-agent group.
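A split policy usually means repeating the same folder rules in every AI crawler's group, and a small generator keeps those groups consistent. The sketch below is hypothetical: the agent list, folder names, and function names are placeholders taken from this article's examples. It uses Python's urllib.robotparser only to confirm that the generated file behaves as intended.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: AI tokens to restrict and the folders they may not fetch.
AI_AGENTS = ["GPTBot", "CCBot", "Google-Extended", "Applebot-Extended"]
PROTECTED_PREFIXES = ["/members/", "/studies/"]


def build_split_robots(agents, prefixes):
    """Emit one group per agent that disallows only the protected folders."""
    groups = []
    for agent in agents:
        lines = [f"User-agent: {agent}"]
        lines += [f"Disallow: {prefix}" for prefix in prefixes]
        groups.append("\n".join(lines))
    # Blank lines separate groups; none appear inside a group.
    return "\n\n".join(groups) + "\n"


def allowed(robots_txt, agent, url):
    """Ask the standard-library parser whether agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    policy = build_split_robots(AI_AGENTS, PROTECTED_PREFIXES)
    print(policy)
    for path in ["/blog/launch", "/members/report", "/studies/2024"]:
        print(path, allowed(policy, "GPTBot", "https://www.example.com" + path))
```

Search crawlers without a group of their own, such as Googlebot, stay unrestricted everywhere. Truly private folders still belong behind a login, because robots.txt is only a request.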

3) Enforce at the edge

- Use your CDN or WAF to rate-limit or block suspicious user-agents and IPs that ignore robots.txt.

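- Where the crawler operator supports it, verify claimed crawlers with forward-confirmed reverse DNS. Look up the hostname for the IP, check that it belongs to the operator’s domain, then confirm that the hostname resolves back to the same IP. Log aggressively. If a bot claims to be a well-known crawler but fails this check, deny it.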
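Forward-confirmed reverse DNS takes two lookups: IP to hostname, then hostname back to IP. Below is a minimal Python sketch that uses only the socket and ipaddress standard-library modules. The function name is hypothetical. The domain suffixes to trust must come from each crawler operator's own documentation, and some operators publish IP ranges instead of supporting reverse DNS. The lookups are injectable parameters so the logic can be tested without network access.

```python
import ipaddress
import socket


def _reverse(ip):
    """IP -> hostname via a PTR lookup (raises OSError if there is none)."""
    return socket.gethostbyaddr(ip)[0]


def _forward(hostname):
    """Hostname -> every IPv4/IPv6 address it resolves to."""
    return [info[4][0] for info in socket.getaddrinfo(hostname, None)]


def is_verified_crawler(ip, trusted_suffixes, reverse=_reverse, forward=_forward):
    """Forward-confirmed reverse DNS check for a claimed crawler IP."""
    try:
        hostname = reverse(ip).rstrip(".").lower()
    except OSError:
        return False  # no PTR record: cannot verify
    # The PTR hostname must sit under one of the operator's domains.
    # Matching on "." + suffix stops lookalikes such as "evilcrawler.example.com".
    if not any(hostname == s or hostname.endswith("." + s) for s in trusted_suffixes):
        return False
    try:
        addresses = forward(hostname)
    except OSError:
        return False
    # The hostname must resolve back to the very IP that made the request.
    try:
        claimed = ipaddress.ip_address(ip)
        return any(ipaddress.ip_address(a) == claimed for a in addresses)
    except ValueError:
        return False
```

In production you would call this only for requests whose user-agent claims to be a known crawler. For example, call is_verified_crawler(request_ip, ["googlebot.com", "google.com"]) for a claimed Googlebot, after confirming those suffixes against Google's current verification guide. Cache the results, because DNS lookups are slow.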

4) Publish clear terms

Update Terms of Service to prohibit training use without permission. This does not stop bad actors, but it sets legal expectations.

Making the most of AI visibility when you do allow

You want your brand cited, not just copied. A few levers help:

- Structure your content: use Product, LocalBusiness, Article, and FAQ schema where appropriate. Keep NAP data consistent across pages.

- Answer-first formatting: start pages with a two- to three-sentence summary that states the what, who, and where. Then expand.

- Strengthen E-E-A-T signals: author bylines, credentials, last-reviewed dates, and outbound citations to credible sources increase trust.

- Use unique assets: charts, local statistics, and original photos give assistants distinctive, trustworthy material worth citing.

- Place clear calls to action: if an assistant quotes your intro, make sure the page itself quickly converts the visitors who do click through.
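FAQ markup pairs naturally with answer-first pages, because each question gets a short, quotable answer. The Python sketch below emits Schema.org FAQPage JSON-LD using the standard Question and acceptedAnswer properties. The function name and the sample question are hypothetical. Since 2023, Google has limited FAQ rich results to a small set of authoritative sites. Treat this markup as a machine-readable summary of your answers rather than a guaranteed search feature.

```python
import json


def faq_page_jsonld(pairs):
    """Build Schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                # Lead with the crisp answer; the page body can expand on it.
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    # Hypothetical Q&A for a local service page.
    print(faq_page_jsonld([
        ("Do you offer emergency plumbing repairs?",
         "Yes. We handle emergency repairs 24/7 across our listed service areas."),
    ]))
```

The visible page should show the same questions and answers. Google's structured data guidelines prohibit marking up content that users cannot see.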

Measurement and monitoring

AI visibility is fuzzy, but you can still track progress.

- Query spot checks: maintain a living list of 30 to 50 high-intent queries. Each week, ask a few major assistants and log whether you are cited, mentioned, or omitted.

- Referral patterns: look for spikes in visits referred by assistants. Not all assistants pass a referrer, so pair this with brand search volume trends.

- Log analysis: track bot user-agents over time to confirm that your allow and block rules are being honored. Watch for new agents.

- Content testing: publish one optimized explainer and one unstructured article on similar topics. If assistants consistently cite the structured page more, you have your playbook.
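Log analysis and referral tracking can start with a short script over your web server's access log. The Python sketch below assumes the common Apache/Nginx combined log format. The token list mirrors the user-agents discussed in this article, while the assistant referrer hostnames are illustrative assumptions to verify against your own traffic. Opt-out tokens such as Google-Extended and Applebot-Extended never appear in logs, because they are not separate crawlers. User-agent strings can also be spoofed, so pair these counts with the reverse DNS check described earlier.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Combined log format: ip ident user [time] "request" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\S+) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Crawler tokens from this article; extend the list as new agents appear.
BOT_TOKENS = ["GPTBot", "CCBot", "PerplexityBot", "ClaudeBot", "Googlebot", "bingbot"]

# Assumption: illustrative assistant hostnames; confirm which ones actually send referrers.
ASSISTANT_HOSTS = {"perplexity.ai", "www.perplexity.ai", "chatgpt.com", "copilot.microsoft.com"}


def summarize(lines):
    """Count crawler hits by token and visits referred by assistant hosts."""
    bot_hits = Counter()
    referrals = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that are not in combined log format
        agent = match["agent"].lower()
        for token in BOT_TOKENS:
            if token.lower() in agent:
                bot_hits[token] += 1
                break
        host = urlparse(match["referer"]).hostname  # None for "-" or empty
        if host in ASSISTANT_HOSTS:
            referrals[host] += 1
    return bot_hits, referrals
```

Run it weekly over the latest log, for example summarize(open("access.log", encoding="utf-8", errors="replace")) with your own log path. Compare the counts with the previous week to spot new agents or sudden changes.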

Real-world recommendations by scenario

Common myths to ignore

The Altimizo take

For most small and local businesses, the net benefit of being visible in AI answers is positive. For content-as-product businesses and anyone with proprietary data, limit training access and use a split strategy. Revisit the decision quarterly, because both bots and policies change faster than your CMS template.

If you want help crafting a policy that fits your goals, we can audit your content, configure robots and edge rules, structure your pages for AI-friendly citations, and track the impact. Book a free consultation with our team at Altimizo.

References and further reading

- robots.txt basics and precedence rules at Google Search Central

- GPTBot allow and block details at OpenAI GPTBot docs

- CCBot information at Common Crawl

- Google-Extended announcement at Google’s blog

- Applebot and Applebot-Extended at Apple Support